by Manfred Mensch, SAP

Today’s complex IT landscapes must support many business users working concurrently with various applications. Software developers are also faced with diverse expectations for program performance: While users demand short response times and a predictable scaling behavior when the amount or the size of processed objects grows, IT administrators want minimal resource consumption so that the required hardware is as cost-efficient as possible. When these expectations are not met, or conflict with each other, developers must find a balanced way to optimize application performance. This requires a systematic, fact-based strategy to identify the parts of the software where most of the runtime is spent or that consume most of the resources, especially memory.

So how do you apply this strategy in your ABAP-based SAP environment? You must start with a complete and functionally correct application running on a test system with a representative set of test data. Then, to measure the application, you need a monitoring tool that can indicate whether the program’s performance needs optimization and whether there are bottlenecks related to runtime or to memory consumption. Such a monitoring tool can also detect issues linked to communication with external resources. For a detailed analysis, you need a profiling tool to examine events — such as instructions, statements, and calls to processing blocks — that are executed as the application runs, and to record their number, runtime (inclusive as well as exclusive), memory consumption, and call stack. This profile information pinpoints the critical — that is, the most expensive — parts of the application coding.

These measurement and analysis tools are readily available to SAP customers as part of all currently supported releases of SAP NetWeaver Application Server (SAP NetWeaver AS) ABAP. Transaction STATS [1] serves as the monitoring tool, while transaction ST05 [2] is helpful for problems caused by communication with external agents. This article looks at the profiling tool included with SAP NetWeaver AS ABAP: transaction SAT, the ABAP Trace tool (also known as Runtime Analysis) [3]. It shows you how to enable the recording of ABAP profiles (also known as traces) and how to analyze the results to find your application’s performance bottlenecks and resource consumption hotspots.

Enabling ABAP Profiling

By default, ABAP profiling is switched off, so that the system’s performance is not impaired. To enable profiling, access the ABAP Trace tool via transaction code SAT and configure the profile recording.

Configuring the Recording

Configure the profile recording on the Measure tab of the SAT start screen, shown in Figure 1.

Copy the DEFAULT measurement settings variant to a new variant (named SAP_INSIDER in the example) and click on the Change button. On the resulting screen, change the settings to establish:

- How you want to measure, using the Duration and Type tab (Figure 2)

- What you want to analyze, using the Statements tab (Figure 3)

- Where you want to measure, using the Program Parts tab (Figure 4)

On the Duration and Type tab, you specify how the measurements will be performed. The settings you choose depend on your unique requirements, and you must weigh the pros and cons of each against your needs. The aggregation setting is the most important decision: Selecting None creates one separate record for each executed event. This setting includes the call hierarchy and enables a very detailed investigation, but creates a potentially huge set of event records (the profile or trace) along with associated disadvantages, such as large overhead during profiling and sluggish analysis. Monitoring of memory consumption requires non-aggregated profiling and adds further significant overhead, so use this setting judiciously. The Per Call Position setting, on the other hand, summarizes data for identical events triggered from the same ABAP source code location into one record. For getting an initial impression of an application’s performance, the advantages of the smaller number of event records resulting from the Per Call Position setting far outweigh the associated loss of detail. The Explicit Switching On and Off of Measurement option enables you to restrict the profiling to a part of your application, such as a user interaction that was identified as expensive by its statistics records.
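The difference between the two aggregation settings can be illustrated with a minimal sketch. It assumes a hypothetical record layout (`EventRecord` and its field names are invented for illustration and do not reflect SAT's internal trace format) and shows how records for identical events triggered from the same source position collapse into one row with a hit count and summed times.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EventRecord:
    """One non-aggregated trace record (hypothetical layout, not SAT's format)."""
    event: str      # name of the executed event
    caller: str     # program that triggered the event
    position: int   # source code location of the call in the caller
    gross_us: int   # inclusive runtime in microseconds
    net_us: int     # exclusive runtime in microseconds


def aggregate_per_call_position(records):
    """Collapse identical events from the same source position into one row."""
    rows = defaultdict(lambda: {"hits": 0, "gross_us": 0, "net_us": 0})
    for rec in records:
        row = rows[(rec.event, rec.caller, rec.position)]
        row["hits"] += 1
        row["gross_us"] += rec.gross_us
        row["net_us"] += rec.net_us
    return dict(rows)


# Aggregation "None" keeps all three records; "Per Call Position" keeps two rows.
trace = [
    EventRecord("Call Method GET_PRICE", "ZORDER", 120, 400, 150),
    EventRecord("Call Method GET_PRICE", "ZORDER", 120, 380, 140),
    EventRecord("Call Method GET_PRICE", "ZORDER", 310, 900, 200),
]
aggregated = aggregate_per_call_position(trace)
```

The aggregated form loses the order of the calls and the individual durations, which is the loss of detail mentioned above.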

The Statements tab is where you choose the events that you want to record. For a first overview, select only the Processing Blocks. Subsequent more thorough investigations may require event records for further statements. If you include operations on internal tables, you can improve the resulting information by selecting the Determine Names of Internal Tables checkbox on the Measure tab of the SAT start screen, which enables SAT to record the names of internal tables as declared in the ABAP coding instead of using memory object IDs, such as IT_<NN>.

If you already have a suspicion about your application’s hotspot, you can identify this on the Program Parts tab by choosing Limitation on Program Components and restricting the event recording to the relevant dedicated source code component, such as a specific class or even a particular method. For the initial evaluation, profiling with No Limitation of the Measurement provides a comprehensive view.

Once the measurement settings variant has been defined and saved, you can reuse it for any number of profile recordings. Note that if you name a variant DEFAULT, SAT will use it on startup.

Profiling the Application

With the measurement settings defined, you are ready to record the ABAP profile, which requires that you run the application. Transactions, programs, and function modules are conveniently profiled from the Measure tab of the SAT start screen by clicking on the Execute button. This starts a new internal session with its own logical unit of work and preserves the SAT internal session. If you selected the Eval. Immediately checkbox on the Measure tab of the SAT start screen, trace evaluation begins immediately after the application completes and control returns to SAT. If you chose the Explicit Switching On and Off of Measurement option on the Duration and Type tab during the variant configuration, profiling starts only when you enter /ron in the OK code field and stops when you enter /roff.

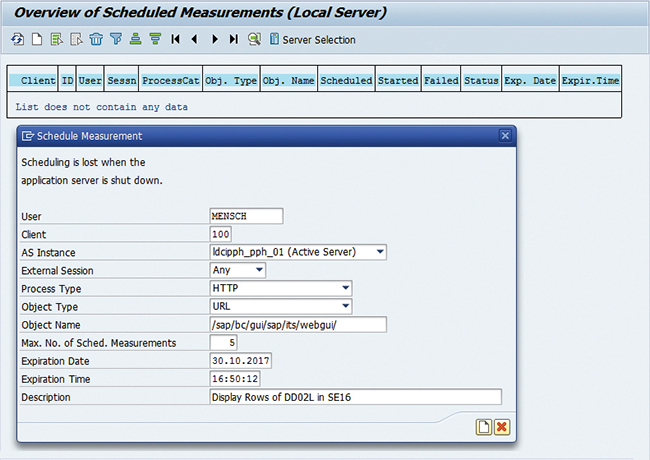

To analyze browser-based applications or other non-SAP GUI applications, you need to schedule the profile recording on the Measure tab of the SAT start screen by clicking on Schedule under For User/Service. This scheduling option establishes a set of criteria (Figure 5) that will all need to be fulfilled by requests arriving in the ABAP back end for the kernel to start ABAP profile recording. The criteria include:

- User: Name of the back-end user

- Client: Client where the session is executed

- AS Instance: ABAP application server instance where profiling will start

- External Session: Number of the external session

- Process Type: Process category (Dialog, Update, RFC, or HTTP, for example)

- Object Type: Type of object to be measured (such as transaction, report, or URL)

- Object Name: Name of the object to be measured

If you are unsure about appropriate values for some criteria, leave them empty or select Any.
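The matching rule described above (every filled criterion must be fulfilled, while empty criteria accept anything) can be sketched as follows. This is only an illustration of the logic; the field names and the `matches` helper are hypothetical, and the actual check is performed by the ABAP kernel.

```python
ANY = None  # stands for a criterion that was left empty or set to Any


def matches(schedule, request):
    """Return True if the request fulfills every criterion that is not ANY."""
    return all(
        expected is ANY or request.get(field) == expected
        for field, expected in schedule.items()
    )


# Hypothetical scheduling entry: only user, client, and process type are fixed.
schedule = {
    "user": "DEVELOPER",
    "client": "100",
    "instance": ANY,
    "process_type": "HTTP",
    "object_type": ANY,
    "object_name": ANY,
}

http_request = {"user": "DEVELOPER", "client": "100", "instance": "app01",
                "process_type": "HTTP", "object_type": "URL",
                "object_name": "/sap/bc/example"}
dialog_request = dict(http_request, process_type="Dialog")

starts_profiling = matches(schedule, http_request)
ignored = not matches(schedule, dialog_request)
```

The fewer criteria you fill in, the more requests qualify, so the limit on the number of scheduled measurements becomes more important.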

There are further criteria to control the scheduling itself. With Max. No. of Sched. Measurements, you can set an upper limit for the number of measurements that can be started. With Expiration Date and Expiration Time, you control how long the scheduling is valid. Note that if you selected the Explicit Switching On and Off of Measurement option on the Duration and Type tab during the variant configuration, it has no impact on a scheduled profile.

Keep in mind that profiling is specific to the application server instance. Ensure that the program runs on the instance where you have activated the recording (or activate profiling on all application server instances). To obtain reproducible profiles with good data quality, execute the application a few times before recording a profile. These pre-runs will fill buffers and caches on all components that are involved in the application. Then the profile recorded during the measurement run will not contain any initialization overhead, which usually is not relevant.

Note that, depending on the measurement settings, profiling can significantly impair the application’s runtime. During analysis, SAT tries to correct for the overhead, but times taken from an ABAP profile should be used only for performance analysis and not for any other purpose.

Analyzing the ABAP Profile

While recording the profile, transaction SAT collects the event records into a file in the application server instance’s file system. During the first evaluation, the records are reformatted and the profile is stored in the database. From then on, the full analysis capabilities of transaction SAT are available. Copious profiling leads to a huge number of event records whose initial formatting for storage in the database takes a long time. Additionally, analyzing large ABAP profiles is slow and tedious.

The Evaluate tab on the SAT start screen (Figure 6) lists available profiles with some of their metadata:

- Status: Status of the measurement

- Measurement Date: Date of the recording

- Measurement Time: Start time of the recording

- Deleted On: Date when the measurement will be deleted (the default is the measurement date plus 50 days)

- Description: Measurement’s description

- Name of Trace Object: Object whose execution was profiled

- Trace User: User who recorded the profile

- Runtime: Total runtime in microseconds (µs)

- Aggregation: Aggregation level of the profile

- System: SAP system where the profile was recorded

Clicking on the Expert Mode button in the toolbar displays further metadata on the measurements. The most helpful additional information is % Internal, which is the relative amount of time spent within the ABAP application server instance. This information helps determine whether optimizations are most promising in the local ABAP code or whether database accesses or remotely called functions, which are considered external, must be improved first.

To display a profile, double-click on the corresponding line in the list. Then, before starting a detailed investigation, click on the funnel icon to adjust the display filter so that event records related to system programs (field TRDIR-RSTAT has the value “S”) are shown. The display filter can also be reached from the SAT main menu (Utilities > Filter Default).

Profile analysis is supported by several tools included with transaction SAT that address specific questions. Here, we look at the Hit List tool, the Processing Blocks tool, the Profile tool, and the Times tool, which are the most useful for our purposes. Most features of the Call Hierarchy tool are available in the Processing Blocks tool with better usability. Issues that may show up in the DB Tables tool are much better investigated with an SQL trace recorded in transaction ST05.

The Hit List Tool

The Hit List tool (Figure 7) aggregates events by calling position [4] and in its default layout shows:

- Hits: Number of calls to the event from identical calling positions

- Gross [microsec]: Total gross runtime of the event in microseconds (µs)

- Net [microsec]: Total net runtime of the event in microseconds (µs)

- Gross [%]: Total relative gross runtime of the event as a percentage of overall runtime

- Net [%]: Total relative net runtime of the event as a percentage of overall runtime

- Statement/Event: Name of the event (this field can be divided into Operation and Operand via the Representation Statement/Event button in the list’s toolbar)

- Program Called: Name of the program in which the event was executed

- Calling Program: Name of the program that called the event

An event’s gross time (also called inclusive time) is the difference between its start and end times. The net (or exclusive) time is calculated by subtracting all gross times of directly nested subevents.
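As a small worked example of these two definitions, the following sketch computes gross and net times for a nested event. The `Event` class, the event names, and the timestamps are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    name: str
    start_us: int
    end_us: int
    children: list = field(default_factory=list)  # directly nested subevents

    @property
    def gross_us(self):
        """Inclusive time: difference between end and start."""
        return self.end_us - self.start_us

    @property
    def net_us(self):
        """Exclusive time: gross time minus gross times of direct subevents."""
        return self.gross_us - sum(child.gross_us for child in self.children)


select = Event("Select ZITEMS", 200, 700)
read = Event("Read Table IT_ITEMS", 750, 800)
method = Event("Call Method LOAD_ITEMS", 100, 900, [select, read])

print(method.gross_us, method.net_us)  # 800 250
```

Only directly nested subevents are subtracted; deeper levels are already contained in the gross times of their parents.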

By default, the Hit List entries are sorted by net time descending, which is adequate when searching for traditional tuning approaches on the algorithmic level. To find the most expensive processing blocks or program flow branches, re-sort the list by gross time descending and analyze from the top downward. Verify that the events are required for the application and that all their executions are necessary: The most efficient optimization strategy eliminates everything that is not required for the application’s business objectives. Finally, sort the list by the number of calls descending. Investigate events that are called often and consider the use of mass-enabled calls if many executions are indeed necessary.

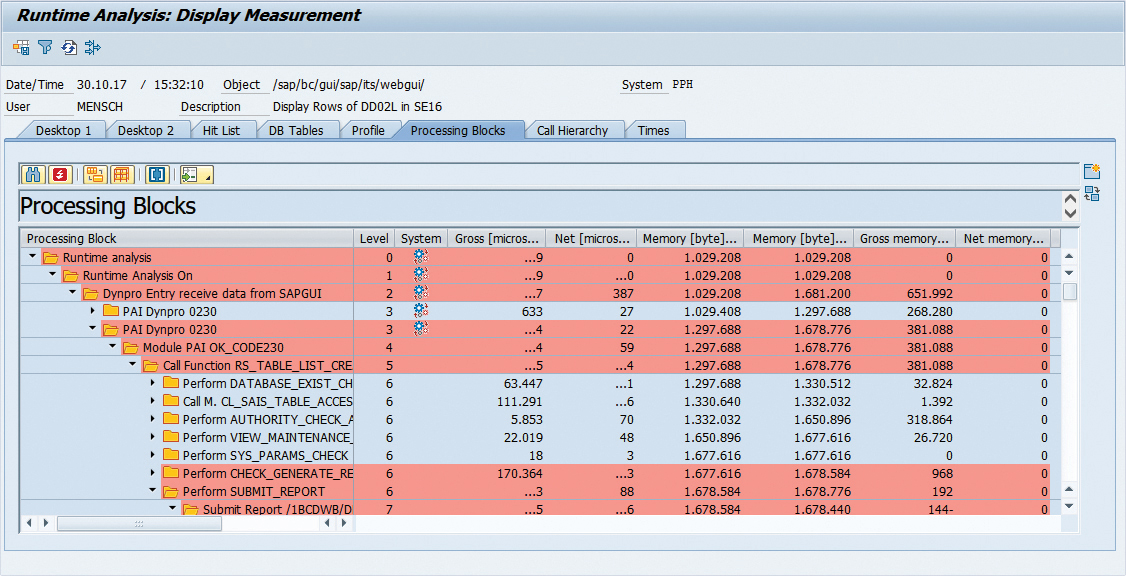

The Processing Blocks Tool

The Processing Blocks tool (Figure 8) is available only for profiles recorded without aggregation (by selecting None on the Duration and Type tab during configuration of the measurement settings). The tool restricts the complete call hierarchy with all events to modularization units — excluding other events provides a good overview of the application’s program flow. The tool displays the following columns:

- Processing Block: Tree-like hierarchical display of the modularization units

- Level: Call stack level — that is, nesting depth of the processing block

- System: Icon to indicate system event

- Gross [microsec]: Gross runtime of the modularization unit in microseconds (µs)

- Net [microsec]: Net runtime of the modularization unit in microseconds (µs)

If the trace was recorded with memory consumption (by setting the Measure Memory Use option on the Duration and Type tab when configuring the recording), the Memory Usage On/Off button shows further fields:

- Memory [byte]: Beginning of the processing block: Memory used when entering the modularization unit

- Memory [byte]: End of the processing block: Memory used when exiting the modularization unit

- Gross memory increase [byte] in the processing block: Difference of the values in the two previous columns

- Gross memory [%] increase in the processing block: Gross memory increase divided by initial memory usage

While the call hierarchy of the processing blocks can be expanded manually level by level, the Critical Processing Blocks button is more convenient: In a popup, simply select a criterion (percentage of runtime [gross or net] or memory increase) and specify a lower limit as a percentage (the default is 5% of the overall total gross time, or of the memory consumption at the beginning of each processing block). Processing blocks that fulfill the condition are identified as critical. They are shown with a red background, and all the events leading to them will be expanded. Depending on the criterion used to define Critical Processing Blocks, the following questions can be answered:

- Percentage of total runtime (gross): What are the critical program flow paths where a substantial fraction of the time is spent? If any of them are superfluous, they must be eliminated by not calling the modularization unit at their root. This will save all the gross time of this call and shorten the application’s runtime by the corresponding amount of time.

- Percentage of total runtime (net): Which processing blocks are directly responsible for a considerable amount of time? If they cannot be removed, they or their caller must be tuned to save as much time as possible.

- Memory increase in processing block: Which processing blocks lead to a significant increase in memory consumption? Verify that the amount of processed data is reasonable and that the data structures are as lean as possible.
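The runtime criteria can be illustrated with a short sketch that applies the default 5% limit to a handful of processing blocks. The block names and times are invented, and treating the limit as inclusive (`>=`) is an assumption made for this sketch, not a documented detail of SAT.

```python
def critical_blocks(blocks, total_gross_us, limit_percent=5.0, key="gross_us"):
    """Return names of blocks whose gross or net time reaches the limit."""
    threshold = total_gross_us * limit_percent / 100.0
    return [block["name"] for block in blocks if block[key] >= threshold]


# Hypothetical call hierarchy: MAIN calls LOAD_ITEMS and FORMAT_OUTPUT,
# and LOAD_ITEMS calls READ_DB.
blocks = [
    {"name": "MAIN", "gross_us": 10000, "net_us": 200},
    {"name": "LOAD_ITEMS", "gross_us": 9400, "net_us": 300},
    {"name": "READ_DB", "gross_us": 9100, "net_us": 9100},
    {"name": "FORMAT_OUTPUT", "gross_us": 400, "net_us": 400},
]
total = 10000  # overall total gross time in microseconds

by_gross = critical_blocks(blocks, total, key="gross_us")
by_net = critical_blocks(blocks, total, key="net_us")
```

With the gross criterion, the whole path from MAIN down to READ_DB is marked, which reveals the expensive program flow branch. With the net criterion, only READ_DB remains, which identifies the block that is directly responsible for the time.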

The Confine to Subarea button restarts the Processing Blocks tool with the selected event record as the root node. You can then focus on a smaller sub-branch of the application’s call hierarchy.

The Profile Tool

In the Profile tool (Figure 9), events are partitioned based on their class. Alternatively, events can be categorized by package, component, or program with the Other Hierarchy button. At each level, further expansion according to the remaining categories is possible from the context menu. The available fields include:

- Profile: Tree-like display of the hierarchy of categories

- Selection: Checkbox to mark individual categories for subsequent navigation via the Display Subarea button

- Number: Total number of calls to events in the category

- Net [microsec]: Total net runtime of events in the category in microseconds (µs)

- Net [%]: Total net runtime of events in the category as a percentage

These fields help to find the areas that receive most of the calls or where most of the net time is incurred. Focus your optimization on these areas.

The Times Tool

The Times tool (Figure 10) lists events in chronological order with the following event data displayed in the columns:

- Index: Running number

- Statement/Event: Name of the event (this field can be divided into Operation and Operand and augmented by Program Called via the Representation Statement/Event button)

- Gross [microsec]: Gross runtime of the event in microseconds (µs)

- Net [microsec]: Net runtime of the event in microseconds (µs)

- Total User Times: Non-system net time

- System Time in Tot.: System net time (caused by programs for which the field TRDIR-RSTAT has the value “S”)

The Times tool is especially helpful for non-aggregated profiles, where it enhances the Hit List results by revealing the individual event records that contribute to an entry with hits larger than one.

Events with a large net time may be candidates for optimizing their algorithms or data structures. If the gross time of an event is high, check whether all nested events are required and optimized.

The Expert Mode button subdivides the net times (user and system) into times used in modularization units, in the ABAP database interface (internal), in the database server (external), for internal table operations, for dynpro operations, and for other events.

Enhancing the Analysis

Transaction SAT supports navigation between its analysis tools so that the investigation of a suspicious event found in one tool can be enhanced in another tool. Let’s look at the most useful ways the Hit List, Processing Blocks, Profile, and Times tools can be used together to refine the analysis.

For a Hit List entry, the subset of all contributing trace records can be displayed in the Times tool, either by clicking on the Display Subarea button or by selecting the appropriate item from the entry’s context menu. This is valuable for aggregated entries (Hits > 1 in the Hit List): for those, it is not obvious from the Hit List whether the time is uniformly distributed over the contributing individual events or whether a few of the events account for most of the time. With the Times tool, this can easily be discerned, provided that the profile was recorded without aggregation.

Similarly, for an entry selected in the Hit List, Processing Blocks, or Times tools, navigation to the event’s position in another tool is supported by the Position Cursor button or by using the context menu. If the navigation starts in the Hit List tool at an aggregated record corresponding to multiple single records, then all of these individual records will be offered for selection in a popup. Pick the record whose position in the other tool you want to see.

The set of records contributing to a category in the Profile tool can be displayed in the Hit List or Times tools via the category’s context menu. To do this for multiple categories, mark their checkboxes in the Selection column and press the Display Subarea button. Double-clicking on an individual category will show its records in the Hit List tool.

Double-clicking on an event record in the Hit List, Processing Blocks, or Times tool navigates to the source code position. This provides context information and shows how the statement was programmed in ABAP. This is especially helpful for calls to modularization units where the parameters passed to the interface are relevant, but not included in the profile.

Checking Scalability by Comparing ABAP Profiles

An application’s total runtime is the sum of the execution times of the invoked events. Scaling relates this total runtime to the amount of processed data or to the size of the handled objects. The overall relation should be (quasi)linear or better, and must never be quadratic or worse. For small data sets (such as those typically available in development and test systems), the impact of contributions that scale worse than (quasi)linear may not be visible in the total runtime, because they are masked by the vast majority of events that have acceptable scalability. As soon as the data volume or the object size increases, the few events with poor scaling behavior dominate the application’s runtime to a catastrophic extent.

A conclusive scalability check with a small data set relies on an event-by-event comparison of two ABAP profiles for distinct runs of the same application. Let’s say that N1 and N2 are the respective amounts of processed data. Choose N1 and N2 so that their ratio N2 / N1 is larger than 10, and so that the runtimes of the relevant events are above 100 µs. (Otherwise statistical fluctuations may make the interpretation less reliable.) Events where the ratio of the net times is significantly above N2 / N1 probably have poor scaling behavior. This approach implicitly assumes a third measurement with N0 = 0 and zero runtime. For this reason, N1 should be large enough to make any overhead independent of the amount of data negligible.
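The comparison described above can be sketched in a few lines. The function below is an illustration only: the event names and times are invented, the factor of 1.5 used to decide what counts as "significantly above" is an arbitrary assumption, and the estimated exponent additionally assumes a power law (runtime proportional to N to the power p), which the article does not state.

```python
import math


def scaling_report(net_1, net_2, n1, n2, min_runtime_us=100, tolerance=1.5):
    """Compare net times of events that occur in both profiles."""
    data_ratio = n2 / n1
    report = {}
    for event, t1 in net_1.items():
        t2 = net_2.get(event)
        # Skip events missing in the second profile or too short to be reliable.
        if t2 is None or t1 < min_runtime_us or t2 < min_runtime_us:
            continue
        time_ratio = t2 / t1
        report[event] = {
            "net_ratio": time_ratio,
            # 1 means linear, 2 means quadratic under the power-law assumption.
            "exponent": math.log(time_ratio) / math.log(data_ratio),
            "suspicious": time_ratio > tolerance * data_ratio,
        }
    return report


# Net times in microseconds for N1 = 100 and N2 = 2000 processed objects.
net_1 = {"Loop at IT_ORDERS": 500, "Read Table IT_ITEMS": 300, "Append IT_RESULT": 40}
net_2 = {"Loop at IT_ORDERS": 10500, "Read Table IT_ITEMS": 120000, "Append IT_RESULT": 800}

report = scaling_report(net_1, net_2, n1=100, n2=2000)
```

In this made-up data set, the loop scales roughly linearly (ratio 21 for 20 times the data), while the table read grows by a factor of 400, which corresponds to quadratic behavior. The append is left out because its runtime in the first profile is below 100 µs.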

To start the comparison, mark two profiles on the Evaluate tab of the SAT start screen and click on the Compare Measurements button. The Hit List comparison (Figure 11) shows the following columns:

- Code: Indicator showing which of the traces contains the event: T1_ indicates that the event occurs only in the first trace, T_2 indicates that the event occurs only in the second trace, and T12 indicates that the event occurs in both traces (T12 must be predominant for a meaningful comparison)

- Event Class: Category of the event

- Event Type: Type of the event

- Name of Event: Name of the event

- Net 2/1: Ratio of the event’s net runtimes between the second and the first trace

- Net 2: Event’s net runtime in the second trace in microseconds (µs)

- Net 1: Event’s net runtime in the first trace in microseconds (µs)

- Number 2/1: Ratio of the event’s numbers of calls between the second and the first trace

- Number 2: Event’s number of calls in the second trace

- Number 1: Event’s number of calls in the first trace

- Gross 2/1: Ratio of the event’s gross runtimes between the second and the first trace

- Gross 2: Event’s gross runtime in the second trace in microseconds (µs)

- Gross 1: Event’s gross runtime in the first trace in microseconds (µs)

- Program Called: Name of the program where the event was executed

- Caller: Name of the calling program

- Offset: Control block offset of the calling position

The default sort order descending by Net 2/1 puts the events with potentially bad scaling behavior at the top of the list. These events need to be investigated very carefully. If there is the slightest probability that they may be executed with a large amount of data, they must be optimized. Otherwise, there will be a high risk of very long application runtimes due to nonlinear scaling behavior.

For ABAP applications, nonlinearity is almost always related to nested operations on internal tables. It can be caused by either an action that is inherently slow (such as sorting an internal table) and executed too often, or by an operation that must be called very often (such as reading a line out of an internal table) but does not run as fast as possible. To resolve the first situation, you must adapt your application’s logic so that the slow action is called only very rarely. In the second case, the technical implementation of the frequently executed statement must be tuned by changing the data structure or the algorithm. Usually this is more straightforward than redesigning the application’s logic.
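The second situation can be illustrated outside ABAP with a minimal sketch. Here a Python list scanned from the start stands in for a read that does not use an optimized key access, and a dictionary stands in for a keyed access such as a hashed table. This analogy is an assumption made for illustration; the function names and data are invented.

```python
def match_linear(order_ids, items):
    """For every order, scan the item list from the start (nested operation)."""
    comparisons = 0
    matched = []
    for order_id in order_ids:
        for item_id, payload in items:
            comparisons += 1
            if item_id == order_id:
                matched.append(payload)
                break
    return matched, comparisons


def match_keyed(order_ids, items):
    """Build a keyed index once, then read each line by key."""
    index = dict(items)
    return [index[order_id] for order_id in order_ids if order_id in index]


def make_data(n):
    return list(range(n)), [(i, f"item {i}") for i in range(n)]


small_orders, small_items = make_data(100)
large_orders, large_items = make_data(1000)

_, small_cost = match_linear(small_orders, small_items)
_, large_cost = match_linear(large_orders, large_items)
print(small_cost, large_cost)  # 5050 500500
```

Ten times the data leads to roughly one hundred times the comparisons in the linear variant, while the keyed variant returns the same result with one lookup per order. Changing the data structure in this way leaves the application's logic untouched.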

ABAP Profiling in Eclipse

Starting with support package 04 for SAP NetWeaver 7.31, there is an Eclipse-based integrated development environment, which is the successor to the SAP GUI-based transactions for ABAP development [5]. The Eclipse-based ABAP development tools include an ABAP Profiling toolset with recording and analysis capabilities similar to those of transaction SAT. In addition, a Call Timeline displays the event records in a graphical call tree (Figure 12). The width of the bars represents the duration of the corresponding events. Coloring is according to a set of configurable rules either for the call type or for the object name. The lower panel supports zooming into a specific time interval of the profile, displayed in the upper panel. Resting the mouse over an event shows details for that event in a popup.

With this graphical approach, it is very easy to spot suspicious patterns that repeat or that have a large nesting depth. In addition, navigation from the Call Timeline to other tools, such as the Hit List or the Call Sequence, or to the ABAP source code, supports further investigation or optimization.

Conclusion

Transaction SAT provides profiling functionality that helps you pinpoint hotspots in your ABAP-based applications and find the most promising strategy for optimizing performance. It enables you to collect the information you need to answer critical questions, such as: Which parts of the coding are executed frequently and how much time is spent there? Where is most of the memory consumed? With the answers to these questions, you can identify problem areas in your ABAP code and derive an approach that balances the application performance requirements of end users and IT administrators.

1 For an introduction to transaction STATS, refer to my article “Identifying Performance Problems in ABAP Applications” in the January-March 2016 issue of SAPinsider (SAPinsiderOnline.com).

2 This tool is described in my article “Track Down the Root of Performance Problems with Transaction ST05” in the July-September 2013 issue of SAPinsider (SAPinsiderOnline.com).

3 Transaction SAT is the successor of transaction SE30, and is available as of enhancement package 2 for SAP NetWeaver 7.0.

4 For profiles recorded with aggregation Per Call Position, this was done during recording. If aggregation None was used, the Hit List summarizes the individual event records on the fly.

5 Learn more about the Eclipse-based ABAP development tools in the article “A Guide to SAP’s Development Environments for SAP HANA and the Cloud” in the January-March 2015 issue of SAPinsider (SAPinsiderOnline.com) and at https://tools.hana.ondemand.com/#abap.

Manfred Mensch (manfred.mensch@sap.com) joined SAP in 2000 and has been a member of the Performance and Scalability team since 2006. His responsibilities include supporting his colleagues in SAP’s Products and Innovation organization by coaching and consulting on performance during the design, implementation, and maintenance phases of their software. Currently, Manfred is focusing on the development of training programs and on performance analysis tools.